What OpenTracy gives you

Drop OpenTracy in where your OpenAI SDK sits today. Every request becomes a trace. Same-intent traces cluster into a dataset. Datasets train a distilled student model that matches your teacher’s output on your traffic. The routing layer swaps the student in under your app through an alias — your code never changes, your cost curve goes down.Install

pip install and you can route on Linux (x86_64 / ARM64), macOS

(Apple Silicon), or Windows (x86_64).

Then jump to the Quickstart.

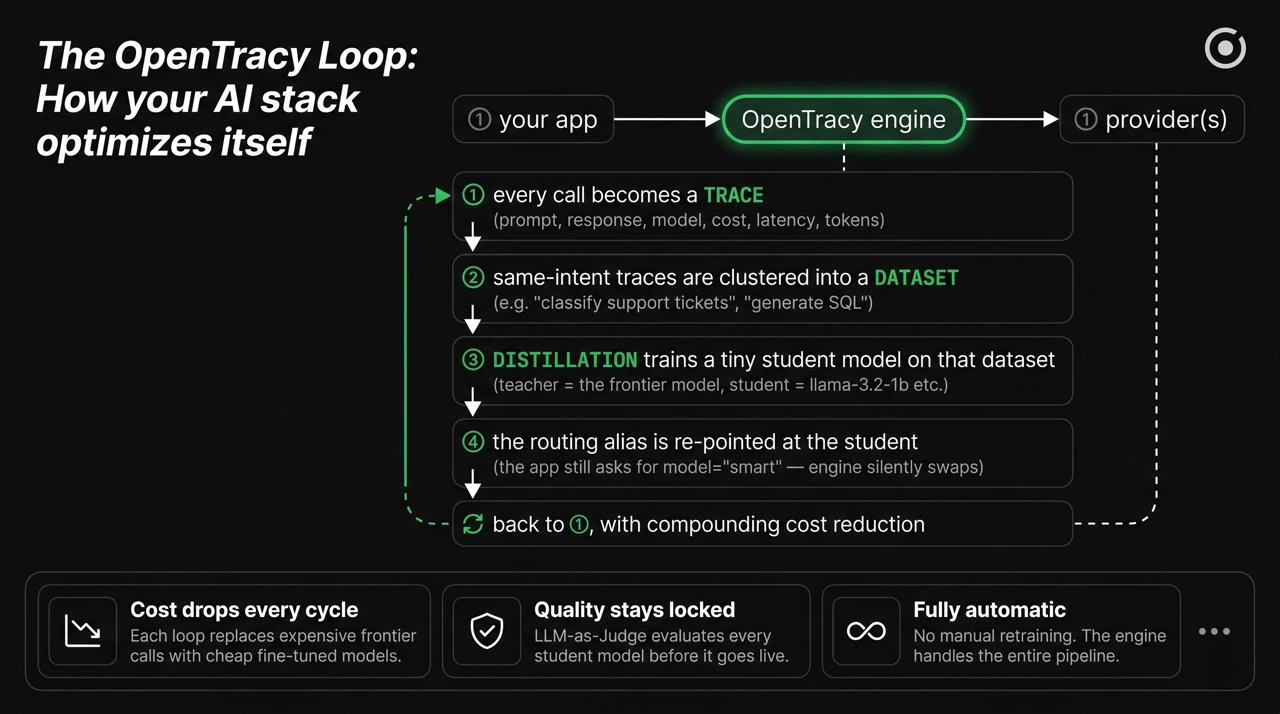

The loop, one step at a time

Your app calls the engine

Point your OpenAI SDK (or any of the 13 providers) at OpenTracy. No code

changes beyond

base_url.Every call becomes a trace

Prompt, response, model, cost, latency, tokens — persisted to ClickHouse

automatically.

Traces cluster into a dataset

Same-intent traces are grouped and labeled (e.g. “classify support tickets”,

“generate SQL”).

A student model gets distilled

The teacher (GPT-4o, Sonnet, …) labels the dataset; a tiny student

(llama-3.2-1b, qwen3-0.6b, …) fine-tunes to match.

Quickstart

Five minutes: install, route your first request, see cost + latency metadata.

Pipeline

The full story — request → trace → dataset → student → alias.

Traces

What we capture per request, where it lives, how it becomes training data.

Distillation

Turn your teacher’s output on your traffic into a cheap custom student.

Why OpenTracy exists

Every team running LLMs in production hits the same wall:- GPT-4o / Claude Sonnet works — but it’s expensive.

- GPT-4o-mini / Haiku is cheap — but quality risk is real on hard prompts.

- Fine-tuning a smaller model is the right answer — but it needs a dataset you don’t have, training infrastructure you don’t want, and a way to actually serve the new model behind your existing code.

How this documentation is organized

Concepts

The “why” — traces, datasets, auto-routing, distillation, and the pipeline that ties them together.

Guides

Task-oriented walkthroughs — drop-in OpenAI replacement, self-hosting, Python SDK.

API Reference

Every function in the Python SDK with arguments, return types, and examples.

GitHub

Source code, issues, and the CI workflow that ships the wheels.